
Documentation

- 1: Getting started

- 1.1: Introduction to Kubernetes operators

- 1.2: Bootstrapping and samples

- 1.3: Patterns and best practices

- 2: Documentation

- 2.1: Implementing a reconciler

- 2.2: Error handling and retries

- 2.3: Event sources and related topics

- 2.4: Working with EventSource caches

- 2.5: Configurations

- 2.6: Observability

- 2.7: Other Features

- 2.8: Dependent resources and workflows

- 2.8.1: Dependent resources

- 2.8.2: Workflows

- 2.9: Architecture and Internals

- 3: Integration Test Index

- 4: FAQ

- 5: Glossary

- 6: Contributing

- 7: Migrations

1 - Getting started

1.1 - Introduction to Kubernetes operators

What are Kubernetes Operators?

Kubernetes operators are software extensions that manage both cluster and non-cluster resources on behalf of Kubernetes. The Java Operator SDK (JOSDK) makes it easy to implement Kubernetes operators in Java, with APIs designed to feel natural to Java developers and framework handling of common problems so you can focus on your business logic.

Why Use Java Operator SDK?

JOSDK provides several key advantages:

- Java-native APIs that feel familiar to Java developers

- Automatic handling of common operator challenges (caching, event handling, retries)

- Production-ready features like observability, metrics, and error handling

- Simplified development so you can focus on business logic instead of Kubernetes complexities

Learning Resources

Getting Started

- Introduction to Kubernetes operators - Core concepts explained

- Implementing Kubernetes Operators in Java - Introduction talk

- Kubernetes operator pattern documentation - Official Kubernetes docs

Deep Dives

- Problems JOSDK solves - Technical deep dive

- Why Java operators make sense - Java in cloud-native infrastructure

- Building a Kubernetes operator SDK for Java - Framework design principles

Tutorials

- Writing Kubernetes operators using JOSDK - Step-by-step blog series

1.2 - Bootstrapping and samples

Creating a New Operator Project

Using the Maven Plugin

The simplest way to start a new operator project is using the provided Maven plugin, which generates a complete project skeleton:

mvn io.javaoperatorsdk:bootstrapper:[version]:create \
  -DprojectGroupId=org.acme \
  -DprojectArtifactId=getting-started

This command creates a new Maven project with:

- A basic operator implementation

- Maven configuration with required dependencies

- Generated CustomResourceDefinition (CRD)

Building Your Project

Build the generated project with Maven:

mvn clean install

The build process automatically generates the CustomResourceDefinition YAML file that you’ll need to apply to your Kubernetes cluster.

Exploring Sample Operators

The sample-operators directory contains real-world examples demonstrating different JOSDK features and patterns:

Available Samples

- Purpose: Creates NGINX webservers from Custom Resources containing HTML code

- Key Features: Multiple implementation approaches using both low-level APIs and higher-level abstractions

- Good for: Understanding basic operator concepts and API usage patterns

- Purpose: Manages database schemas in MySQL instances

- Key Features: Demonstrates managing non-Kubernetes resources (external systems)

- Good for: Learning how to integrate with external services and manage state outside Kubernetes

- Purpose: Manages Tomcat instances and web applications

- Key Features: Multiple controllers managing related custom resources

- Good for: Understanding complex operators with multiple resource types and relationships

Running the Samples

Prerequisites

The easiest way to try the samples is to use a local Kubernetes cluster.

Step-by-Step Instructions

Apply the CustomResourceDefinition:

kubectl apply -f target/classes/META-INF/fabric8/[resource-name]-v1.yml

Run the operator:

mvn exec:java -Dexec.mainClass="your.main.ClassName"

Or run your main class directly from your IDE.

Create custom resources: The operator will automatically detect and reconcile custom resources when you create them:

kubectl apply -f examples/sample-resource.yaml

Detailed Examples

For comprehensive setup instructions and examples, see:

- MySQL Schema sample README

- Individual sample directories for specific setup requirements

Next Steps

After exploring the samples:

- Review the patterns and best practices guide

- Learn about implementing reconcilers

- Explore dependent resources and workflows for advanced use cases

1.3 - Patterns and best practices

This document describes patterns and best practices for building and running operators, and how to implement them using the Java Operator SDK (JOSDK).

See also best practices in the Operator SDK.

Implementing a Reconciler

Always Reconcile All Resources

Reconciliation can be triggered by events from multiple sources. It might be tempting to check the events and only reconcile the related resource or subset of resources that the controller manages. However, this is considered an anti-pattern for operators.

Why this is problematic:

- Kubernetes’ distributed nature makes it difficult to ensure all events are received

- If your operator misses some events and doesn’t reconcile the complete state, it might operate with incorrect assumptions about the cluster state

- Always reconcile all resources, regardless of the triggering event

JOSDK makes this efficient by providing smart caches to avoid unnecessary Kubernetes API server access and ensuring your reconciler is triggered only when needed.

Since there’s industry consensus on this topic, JOSDK no longer provides event access from Reconciler implementations starting with version 2.

Event Sources and Caching

During reconciliation, best practice is to reconcile all dependent resources managed by the controller. This means comparing the desired state with the actual cluster state.

The Challenge: Reading the actual state directly from the Kubernetes API Server every time would create significant load.

The Solution: Create a watch for dependent resources and cache their latest state using the Informer pattern. In JOSDK, informers are wrapped into EventSource to integrate with the framework’s eventing system via the InformerEventSource class.

How it works:

- New events trigger reconciliation only when the resource is already cached

- Reconciler implementations compare desired state with cached observed state

- If a resource isn’t in cache, it needs to be created

- If actual state doesn’t match desired state, the resource needs updating
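The comparison described above can be sketched as a small, pure decision function. This is only an illustrative model using plain Java collections, not JOSDK code: the class name DesiredStateDiff, the Action enum, and the use of a Map to stand in for a resource spec are all assumptions made for the example.

```java
import java.util.Map;
import java.util.Optional;

// Toy model (hypothetical names): decide what to do with a secondary resource
// by comparing the desired state with the state found in the cache.
final class DesiredStateDiff {

  enum Action { CREATE, UPDATE, NONE }

  // 'cached' is empty when the resource is not in the cache.
  static Action decide(Optional<Map<String, String>> cached, Map<String, String> desired) {
    if (cached.isEmpty()) {
      return Action.CREATE; // not in cache: it needs to be created
    }
    // in cache: update only when the observed state differs from the desired one
    return cached.get().equals(desired) ? Action.NONE : Action.UPDATE;
  }

  public static void main(String[] args) {
    Map<String, String> desired = Map.of("replicas", "3");
    System.out.println(decide(Optional.empty(), desired));
    System.out.println(decide(Optional.of(Map.of("replicas", "2")), desired));
    System.out.println(decide(Optional.of(desired), desired));
  }
}
```

Because the function depends only on its inputs, calling it repeatedly with the same observed state yields the same decision, which is the property the next section relies on.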

Idempotency

Since all resources should be reconciled when your Reconciler is triggered, and reconciliations can be triggered multiple times for any given resource (especially with retry policies), it’s crucial that Reconciler implementations be idempotent.

Idempotency means: The same observed state should always result in exactly the same outcome.

Key implications:

- Operators should generally operate in a stateless fashion

- Since operators usually manage declarative resources, ensuring idempotency is typically straightforward

Synchronous vs Asynchronous Resource Handling

Sometimes your reconciliation logic needs to wait for resources to reach their desired state (e.g., waiting for a Pod to become ready). You can approach this either synchronously or asynchronously.

Asynchronous Approach (Recommended)

Exit the reconciliation logic as soon as the Reconciler determines it cannot complete at this point. This frees resources to process other events.

Requirements: Set up adequate event sources to monitor state changes of all resources the operator waits for. When state changes occur, the Reconciler is triggered again and can finish processing.

Synchronous Approach

Periodically poll resources’ state until they reach the desired state. If done within the reconcile method, this blocks the current thread for potentially long periods.

Recommendation: Use the asynchronous approach for better resource utilization.

Why Use Automatic Retries?

Automatic retries are enabled by default and configurable. While you can deactivate this feature, we advise against it.

Why retries are important:

- Transient network errors: Common in Kubernetes’ distributed environment, easily resolved with retries

- Resource conflicts: When multiple actors modify resources simultaneously, conflicts can be resolved by reconciling again

- Transparency: Automatic retries make error handling completely transparent when successful

Managing State

Thanks to Kubernetes resources’ declarative nature, operators dealing only with Kubernetes resources can operate statelessly. They don’t need to maintain resource state information since it should be possible to rebuild the complete resource state from its representation.

When State Management Becomes Necessary

This stateless approach typically breaks down when dealing with external resources. You might need to track external state or allocated values for future reconciliations. There are multiple options:

Store state in the primary resource’s status sub-resource. This is more complex than it might seem at first glance; refer to the documentation for further details.

Store state in separate resources designed for this purpose:

- Kubernetes Secret or ConfigMap

- Dedicated Custom Resource with validated structure

Handling Informer Errors and Cache Sync Timeouts

You can configure whether the operator should stop when informer errors occur on startup.

Default Behavior

By default, if there’s a startup error (e.g., the informer lacks permissions to list target resources for primary or secondary resources), the operator stops immediately.

Alternative Configuration

Set stopOnInformerErrorDuringStartup to false to start the operator even when some informers fail to start. In this case:

- The operator continuously retries connection with exponential backoff

- This applies both to startup failures and runtime problems

- The operator only stops for fatal errors (currently when a resource cannot be deserialized)

Use case: When watching multiple namespaces, it’s better to start the operator so it can handle other namespaces while resolving permission issues in specific namespaces.

Cache Sync Timeout Impact

The stopOnInformerErrorDuringStartup setting affects cache sync timeout behavior:

- If true: Operator stops on cache sync timeout

- If false: After timeout, the controller starts reconciling resources even if some event source caches haven’t synced yet

Graceful Shutdown

You can provide sufficient time for the reconciler to process and complete ongoing events before shutting down. Simply set an appropriate duration value for reconciliationTerminationTimeout using ConfigurationServiceOverrider.

final var operator = new Operator(override -> override.withReconciliationTerminationTimeout(Duration.ofSeconds(5)));

2 - Documentation

JOSDK Documentation

This section contains detailed documentation for all Java Operator SDK features and concepts. Whether you’re building your first operator or need advanced configuration options, you’ll find comprehensive guides here.

Core Concepts

- Implementing a Reconciler - The heart of any operator

- Architecture - How JOSDK works under the hood

- Dependent Resources & Workflows - Managing resource relationships

- Configuration - Customizing operator behavior

- Error Handling & Retries - Managing failures gracefully

Advanced Features

- Eventing - Understanding the event-driven model

- Accessing Resources in Caches - How to access resources in caches

- Observability - Monitoring and debugging your operators

- Other Features - Additional capabilities and integrations

Each guide includes practical examples and best practices to help you build robust, production-ready operators.

2.1 - Implementing a reconciler

How Reconciliation Works

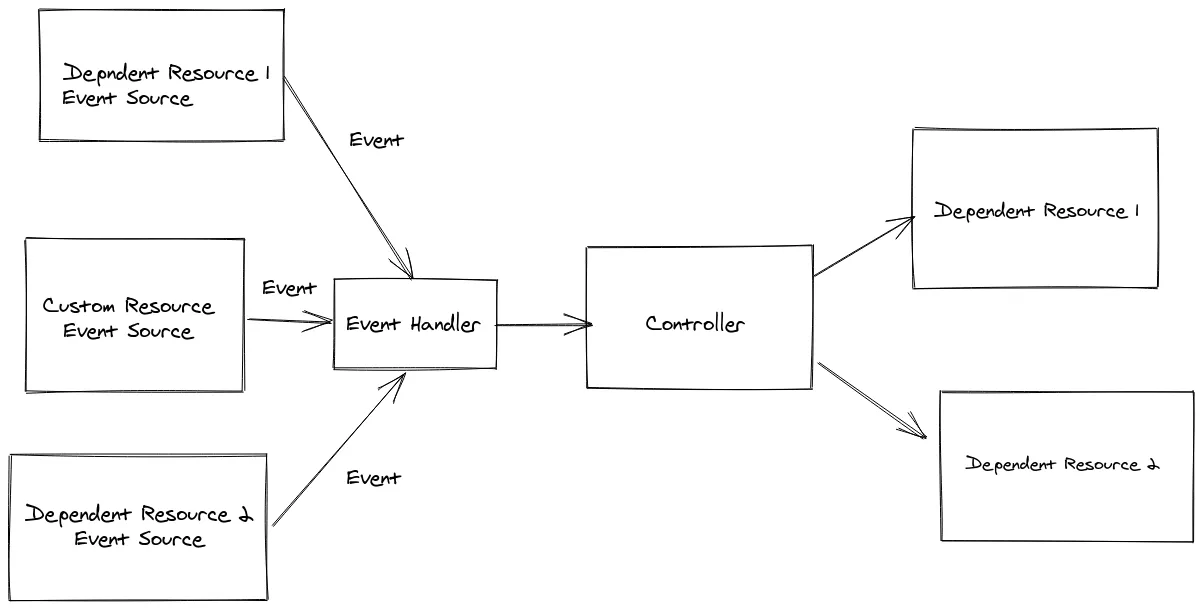

The reconciliation process is event-driven and follows this flow:

Event Reception: Events trigger reconciliation from:

- Primary resources (usually custom resources) when created, updated, or deleted

- Secondary resources through registered event sources

Reconciliation Execution: Each reconciler handles a specific resource type and listens for events from the Kubernetes API server. When an event arrives, it triggers reconciliation unless one is already running for that resource. The framework ensures no concurrent reconciliation occurs for the same resource.

Post-Reconciliation Processing: After reconciliation completes, the framework:

- Schedules a retry if an exception was thrown

- Schedules new reconciliation if events were received during execution

- Schedules a timer event if rescheduling was requested (UpdateControl.rescheduleAfter(..))

- Finishes reconciliation if none of the above apply

The SDK core implements an event-driven system where events trigger reconciliation requests.

Implementing Reconciler and Cleaner Interfaces

To implement a reconciler, you must implement the Reconciler interface.

A Kubernetes resource lifecycle has two phases depending on whether the resource is marked for deletion:

Normal Phase: The framework calls the reconcile method for regular resource operations.

Deletion Phase: If the resource is marked for deletion and your Reconciler implements the Cleaner interface, only the cleanup method is called. The framework automatically handles finalizers for you.

If you need explicit cleanup logic, always use finalizers. See Finalizer support for details.

Using UpdateControl and DeleteControl

These classes control the behavior after reconciliation completes.

UpdateControl can instruct the framework to:

- Update the status sub-resource

- Reschedule reconciliation with a time delay

@Override
public UpdateControl<MyCustomResource> reconcile(
    MyCustomResource resource, Context<MyCustomResource> context) {
  // omitted code
  return UpdateControl.patchStatus(resource).rescheduleAfter(10, TimeUnit.SECONDS);
}

To reschedule without an update:

@Override
public UpdateControl<MyCustomResource> reconcile(
    MyCustomResource resource, Context<MyCustomResource> context) {
  // omitted code
  return UpdateControl.<MyCustomResource>noUpdate().rescheduleAfter(10, TimeUnit.SECONDS);
}

Note, though, that using EventSources is the preferred way of scheduling, since the reconciliation is then triggered only when a resource changes rather than at fixed intervals.

At the end of the reconciliation, you typically update the status sub-resource. It is also possible to update both the status and the resource with the patchResourceAndStatus method. In this case, the resource is updated first, followed by the status, using two separate requests to the Kubernetes API.

From v5, UpdateControl only supports patching resources, by default using Server Side Apply (SSA).

It is important to understand how SSA works in Kubernetes. Mainly, resources applied using SSA should contain only the fields identifying the resource and those the user is interested in (a ‘fully specified intent’ in Kubernetes parlance), and are therefore usually built from a resource created from scratch; see sample. To contrast, see the same sample, this time without SSA.

Non-SSA based patch is still supported. You can control whether or not to use SSA using ConfigurationService.useSSAToPatchPrimaryResource() and the related ConfigurationServiceOverrider.withUseSSAToPatchPrimaryResource method. Related integration test can be found here.

Handling resources directly using the client, instead of delegating these update operations to JOSDK by returning an UpdateControl at the end of your reconciliation, should work appropriately. However, we recommend using UpdateControl instead, since there are subtleties to be aware of and JOSDK makes sure that the operations are handled properly. For example, if you are using a finalizer, JOSDK makes sure to include it in your fully specified intent so that it is not unintentionally removed from the resource (which would happen if you omit it, since your controller is the designated manager for that field and Kubernetes interprets the finalizer being gone from the specified intent as a request for removal).

DeleteControl typically instructs the framework to remove the finalizer after the dependent resources are cleaned up in the cleanup implementation.

@Override
public DeleteControl cleanup(MyCustomResource customResource, Context<MyCustomResource> context) {
  // omitted code
  return DeleteControl.defaultDelete();
}

However, it is possible to instruct the SDK not to remove the finalizer. This allows you to clean up the resources in a more asynchronous way, mostly for cases when there is a long waiting period after a delete operation is initiated. Note that in this case you might want to either schedule a timed event to make sure cleanup is executed again or use event sources to get notified about the state changes of the deleted resource.

Finalizer Support

Kubernetes finalizers make sure that your Reconciler gets a chance to act before a resource is actually deleted after it’s been marked for deletion. Without finalizers, the resource would be deleted directly by the Kubernetes server.

Depending on your use case, you might or might not need to use finalizers. In particular, if your operator doesn’t need to clean any state that would not be automatically managed by the Kubernetes cluster (e.g. external resources), you might not need to use finalizers. You should use the Kubernetes garbage collection mechanism as much as possible by setting owner references for your secondary resources so that the cluster can automatically delete them for you whenever the associated primary resource is deleted. Note that setting owner references is the responsibility of the Reconciler implementation, though dependent resources make that process easier.

If you do need to clean such a state, you need to use finalizers so that their presence will prevent the Kubernetes server from deleting the resource before your operator is ready to allow it. This allows for clean-up even if your operator was down when the resource was marked for deletion.

JOSDK makes cleaning resources in this fashion easier by taking care of managing finalizers automatically for you when needed. The only thing you need to do is let the SDK know that your operator is interested in cleaning the state associated with your primary resources by having it implement the Cleaner<P> interface. If your Reconciler doesn’t implement the Cleaner interface, the SDK will consider that you don’t need to perform any clean-up when resources are deleted and will, therefore, not activate finalizer support.

In other words, finalizer support is added only if your Reconciler implements the Cleaner interface.

The framework automatically adds finalizers as the first step, thus after a resource is created but before the first reconciliation. The finalizer is added via a separate Kubernetes API call. As a result of this update, the finalizer will then be present on the resource. The reconciliation can then proceed as normal.

The automatically added finalizer will also be removed after the cleanup is executed on the reconciler. This behavior is customizable as explained above when we addressed the use of DeleteControl.

You can specify the name of the finalizer to use for your Reconciler using the @ControllerConfiguration annotation. If you do not specify a finalizer name, one will be automatically generated for you.

From v5, by default, the finalizer is added using Server Side Apply. See also UpdateControl in docs.
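The finalizer lifecycle described in this section can be pictured with a toy state model. The sketch below is not framework code and makes several simplifying assumptions: the finalizer name, the Resource class, and the handle method are hypothetical, the finalizer is removed immediately after cleanup (the default delete behavior), and adding the finalizer is modelled as its own step before the first reconciliation.

```java
import java.util.LinkedHashSet;
import java.util.Set;

// Toy model (hypothetical names) of how finalizer handling decides which
// step runs for a primary resource.
final class FinalizerFlow {

  static final String FINALIZER = "example.com/finalizer"; // assumed name

  static final class Resource {
    final Set<String> finalizers = new LinkedHashSet<>();
    boolean markedForDeletion;
  }

  // Returns the name of the step taken for the given resource state.
  static String handle(Resource resource, boolean implementsCleaner) {
    if (!implementsCleaner) {
      // no finalizer support: deletion is left to the cluster
      return resource.markedForDeletion ? "skip" : "reconcile";
    }
    if (resource.markedForDeletion) {
      if (resource.finalizers.contains(FINALIZER)) {
        resource.finalizers.remove(FINALIZER); // removed once cleanup has run
        return "cleanup";
      }
      return "skip";
    }
    if (resource.finalizers.add(FINALIZER)) {
      return "add-finalizer"; // added before the first reconciliation
    }
    return "reconcile";
  }

  public static void main(String[] args) {
    Resource resource = new Resource();
    System.out.println(handle(resource, true));
    System.out.println(handle(resource, true));
    resource.markedForDeletion = true;
    System.out.println(handle(resource, true));
  }
}
```

In the model, as in the text, a resource without the Cleaner path never gets a finalizer, so nothing blocks the cluster from deleting it.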

Making sure the primary resource is up to date for the next reconciliation

It is typical to want to update the status subresource with the information that is available during the reconciliation. This is sometimes referred to as the last observed state. When the primary resource is updated, though, the framework does not cache the resource directly, relying instead on the propagation of the update to the underlying informer’s cache. It can, therefore, happen that, if other events trigger other reconciliations, before the informer cache gets updated, your reconciler does not see the latest version of the primary resource. While this might not typically be a problem in most cases, as caches eventually become consistent, depending on your reconciliation logic, you might still require the latest status version possible, for example, if the status subresource is used to store allocated values. See Representing Allocated Values from the Kubernetes docs for more details.

The framework provides the PrimaryUpdateAndCacheUtils utility class to help with these use cases.

This class’ methods use internal caches in combination with update methods that leverage optimistic locking. If the update method fails on optimistic locking, it will retry using a fresh resource from the server as the base for modification.

@Override
public UpdateControl<StatusPatchCacheCustomResource> reconcile(
    StatusPatchCacheCustomResource resource, Context<StatusPatchCacheCustomResource> context) {
  // omitted logic

  // update with SSA requires a fresh copy
  var freshCopy = createFreshCopy(resource);
  freshCopy.getStatus().setValue(statusWithState());

  var updatedResource = PrimaryUpdateAndCacheUtils.ssaPatchStatusAndCacheResource(resource, freshCopy, context);

  // the resource was updated transparently via the utils, no further action is required via UpdateControl in this case
  return UpdateControl.noUpdate();
}

After the update, PrimaryUpdateAndCacheUtils.ssaPatchStatusAndCacheResource puts the result of the update into an internal cache, and the framework will make sure that the next reconciliation contains the most recent version of the resource. Note that it is not necessarily the same version returned as the response from the update; it can be a newer version, since other parties can do additional updates in the meantime. However, unless it has been explicitly modified, that resource will contain the up-to-date status.

Note that you can also perform additional updates after PrimaryUpdateAndCacheUtils.*PatchStatusAndCacheResource is called, either by calling any of the PrimaryUpdateAndCacheUtils methods again or via UpdateControl. Using PrimaryUpdateAndCacheUtils guarantees that the next reconciliation will see a resource state no older than the version updated via PrimaryUpdateAndCacheUtils.

See related integration test here.

Trigger reconciliation for all events

TL;DR: We provide an execution mode where the reconcile method is called on every event from an event source.

The framework optimizes execution for generic use cases, which, in almost all cases, fall into two categories:

- The controller does not use finalizers; thus when the primary resource is deleted, all the managed secondary resources are cleaned up using the Kubernetes garbage collection mechanism, a.k.a., using owner references. This mechanism, however, only works when all secondary resources are Kubernetes resources in the same namespace as the primary resource.

- The controller uses finalizers (the controller implements the Cleaner interface) when explicit cleanup logic is required, typically for external resources and when secondary resources are in a different namespace than the primary resources (owner references cannot be used in this case).

Note that neither of those cases trigger the reconcile method of the controller on the Delete event of the primary resource. When a finalizer is used, the SDK calls the cleanup method of the Cleaner implementation when the resource is marked for deletion and the finalizer specified by the controller is present on the primary resource. When there is no finalizer, there is no need to call the reconcile method on a Delete event since all the cleanup will be done by the garbage collector. This avoids reconciliation cycles.

However, there are cases when controllers do not strictly follow those patterns, typically when:

- Only some of the primary resources use finalizers, e.g., you need to create an external resource for some of the primary resources but not for others.

- You maintain some additional in-memory caches (so not all the caches are encapsulated by an EventSource) and you don’t want to use finalizers. For those cases, you typically want to clean up your caches when the primary resource is deleted.

For such use cases you can set triggerReconcilerOnAllEvents to true. As a result, the reconcile method will be triggered on ALL events (including Delete events), making it possible to support the above use cases.

In this mode:

- Even if the primary resource is already deleted from the Informer’s cache, the last known state is still passed as the parameter to the reconciler. You can check if the resource is deleted using Context.isPrimaryResourceDeleted().

- The retry, rate limiting, re-schedule, and filter mechanisms work normally. The internal caches related to the resource are cleaned up only when there is a successful reconciliation after a Delete event was received for the primary resource and reconciliation is not re-scheduled.

- You cannot use the Cleaner interface. The framework assumes you will explicitly manage the finalizers. To add a finalizer, you can use PrimaryUpdateAndCacheUtils.

- You cannot use managed dependent resources since those manage the finalizers and other logic related to the normal execution mode.

See also the sample for selectively adding finalizers for resources.

Expectations

Expectations are a pattern to ensure that, during reconciliation, your secondary resources are in a certain state.

For a more detailed explanation see this blogpost.

You can find framework support for this pattern in the io.javaoperatorsdk.operator.processing.expectation package. See also related integration test.

Note that this feature is marked as @Experimental: the API might be improved or changed based on feedback, but we intend to support it, and it might later be integrated into Dependent Resources and/or Workflows.

The idea, in a nutshell, is that you can track your expectations in the expectation manager in the reconciler, which has an API that covers the common use cases.

The following sample is a simplified version of the integration test; it creates a deployment and sets a status message once the target three replicas are ready:

public class ExpectationReconciler implements Reconciler<ExpectationCustomResource> {

  // some code is omitted

  private final ExpectationManager<ExpectationCustomResource> expectationManager =
      new ExpectationManager<>();

  @Override
  public UpdateControl<ExpectationCustomResource> reconcile(
      ExpectationCustomResource primary, Context<ExpectationCustomResource> context) {
    // exit as soon as possible if there is an expectation that is neither timed out nor fulfilled yet
    if (expectationManager.ongoingExpectationPresent(primary, context)) {
      return UpdateControl.noUpdate();
    }
    var deployment = context.getSecondaryResource(Deployment.class);
    if (deployment.isEmpty()) {
      createDeployment(primary, context);
      expectationManager.setExpectation(
          primary, Duration.ofSeconds(timeout), deploymentReadyExpectation());
      return UpdateControl.noUpdate();
    } else {
      // Checks the expectation and removes it once it is fulfilled.
      // In your logic you might add a next expectation based on your workflow.
      // Expectations have a name, so you can easily distinguish multiple expectations.
      var res = expectationManager.checkExpectation("deploymentReadyExpectation", primary, context);
      if (res.isFulfilled()) {
        return patchStatusWithMessage(primary, DEPLOYMENT_READY);
      } else if (res.isTimedOut()) {
        // you might add some other timeout handling here
        return patchStatusWithMessage(primary, DEPLOYMENT_TIMEOUT);
      }
    }
    return UpdateControl.noUpdate();
  }
}

2.2 - Error handling and retries

How Automatic Retries Work

JOSDK automatically schedules retries whenever your Reconciler throws an exception. This robust retry mechanism helps handle transient issues like network problems or temporary resource unavailability.

Default Retry Behavior

The default retry implementation covers most typical use cases with exponential backoff:

GenericRetry.defaultLimitedExponentialRetry()
    .setInitialInterval(5000) // Start with 5-second delay
    .setIntervalMultiplier(1.5D) // Increase delay by 1.5x each retry
    .setMaxAttempts(5); // Maximum 5 attempts
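To get a feel for what these values mean in practice, the delays can be worked out by hand. The sketch below is plain arithmetic rather than JOSDK code: it assumes each delay is simply the previous one multiplied by the interval multiplier, with no maximum-interval cap, and the class and method names are made up for the example.

```java
import java.util.ArrayList;
import java.util.List;

// Illustrative arithmetic only (hypothetical names): the sequence of delays
// produced by an exponential backoff with the values shown above.
final class BackoffSchedule {

  static List<Long> delaysMillis(long initialIntervalMillis, double multiplier, int maxAttempts) {
    List<Long> delays = new ArrayList<>();
    double current = initialIntervalMillis;
    for (int attempt = 0; attempt < maxAttempts; attempt++) {
      delays.add((long) current); // truncate fractional milliseconds
      current *= multiplier;
    }
    return delays;
  }

  public static void main(String[] args) {
    // prints [5000, 7500, 11250, 16875, 25312]
    System.out.println(delaysMillis(5000, 1.5, 5));
  }
}
```

Under these assumptions, the five attempts together span roughly 66 seconds of waiting, which gives a rough idea of how long a persistently failing resource keeps being retried before the limit is reached.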

Configuration Options

Using the @GradualRetry annotation:

@ControllerConfiguration
@GradualRetry(maxAttempts = 3, initialInterval = 2000)
public class MyReconciler implements Reconciler<MyResource> {
  // reconciler implementation
}

Custom retry implementation:

Specify a custom retry class in the @ControllerConfiguration annotation:

@ControllerConfiguration(retry = MyCustomRetry.class)
public class MyReconciler implements Reconciler<MyResource> {
  // reconciler implementation
}

Your custom retry class must:

- Provide a no-argument constructor for automatic instantiation

- Optionally implement AnnotationConfigurable for configuration from annotations. See the GenericRetry implementation for more details.

Accessing Retry Information

The Context object provides retry state information:

@Override
public UpdateControl<MyResource> reconcile(MyResource resource, Context<MyResource> context) {
  if (context.isLastAttempt()) {
    // Handle final retry attempt differently
    resource.getStatus().setErrorMessage("Failed after all retry attempts");
    return UpdateControl.patchStatus(resource);
  }

  // Normal reconciliation logic
  // ...
  return UpdateControl.noUpdate();
}

Important Retry Behavior Notes

- Retry limits don’t block new events: When retry limits are reached, new reconciliations still occur for new events

- No retry on limit reached: If an error occurs after reaching the retry limit, no additional retries are scheduled until new events arrive

- Event-driven recovery: Fresh events can restart the retry cycle, allowing recovery from previously failed states

A successful execution resets the retry state.

Reconciler Error Handler

In order to facilitate error reporting, you can override the updateErrorStatus method in Reconciler:

public class MyReconciler implements Reconciler<WebPage> {

  @Override
  public ErrorStatusUpdateControl<WebPage> updateErrorStatus(
      WebPage resource, Context<WebPage> context, Exception e) {
    return handleError(resource, e);
  }
}

The updateErrorStatus method is called in case an exception is thrown from the Reconciler. It is also called even if no retry policy is configured, just after the reconciler execution. RetryInfo.getAttemptCount() is zero after the first reconciliation attempt, since it is not a result of a retry (regardless of whether a retry policy is configured).

ErrorStatusUpdateControl tells the SDK what to do and how to perform the status update on the primary resource, which is always performed as a status sub-resource request. Note that this update request will also produce an event and result in a reconciliation if the controller is not generation-aware.

This feature is only available for the reconcile method of the Reconciler interface, since there should not be updates to resources that have been marked for deletion.

Retry can be skipped in cases of unrecoverable errors:

ErrorStatusUpdateControl.patchStatus(customResource).withNoRetry();

Correctness and Automatic Retries

While it is possible to deactivate automatic retries, this is not desirable unless there is a particular reason.

Errors naturally occur, whether it be transient network errors or conflicts when a given resource is handled by a Reconciler but modified simultaneously by a user in a different process. Automatic retries handle these cases nicely and will eventually result in a successful reconciliation.

Retry, Rescheduling and Event Handling Common Behavior

Retry, reschedule, and standard event processing form a relatively complex system, each of these functionalities interacting with the others. In the following, we describe the interplay of these features:

1. A successful execution resets a retry and the rescheduled executions that were present before the reconciliation. However, the reconciliation outcome can instruct a new rescheduling (UpdateControl or DeleteControl).

For example, if a reconciliation had previously been rescheduled for after some amount of time, but an event triggered the reconciliation (or cleanup) in the meantime, the scheduled execution would be automatically cancelled, i.e. rescheduling a reconciliation does not guarantee that one will occur precisely at that time; it simply guarantees that a reconciliation will occur at the latest by that time. Of course, it’s always possible to reschedule a new reconciliation at the end of that “automatic” reconciliation.

Similarly, if a retry was scheduled, any event from the cluster triggering a successful execution in the meantime would cancel the scheduled retry (because there’s now no point in retrying something that already succeeded).

2. In case an exception is thrown, a retry is initiated. However, if an event is received in the meantime, it will be reconciled instantly, and this execution won’t count as a retry attempt.

3. If the retry limit is reached (so no more automatic retries would happen) but a new event is received, the reconciliation will still happen, but it won’t reset the retry and will still be marked as the last attempt in the retry info. Point (1) still holds, so a successful reconciliation will reset the retry, but no retry will happen in case of an error.

The thing to remember when it comes to retrying or rescheduling is that JOSDK tries to avoid unnecessary work. When you reschedule an operation, you instruct JOSDK to perform that operation by the end of the rescheduling delay at the latest. If something occurs on the cluster that triggers that particular operation (reconciliation or cleanup) earlier, JOSDK considers that there’s no point in attempting that operation again at the end of the specified delay. The same idea also applies to retries.
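The cancellation rules above can be illustrated with a toy model. The following is a minimal sketch in plain Java and is not how JOSDK implements its scheduling: the ReschedulingSketch class and all of its methods are hypothetical names introduced for this example. It covers points (1) and (3) only and does not distinguish event-triggered executions from retries.

```java
import java.util.OptionalLong;

/** Toy model of the pending execution tracked for one primary resource (not JOSDK code). */
final class ReschedulingSketch {
  private final int maxRetries;
  private final long retryDelayMs;
  private int retriesUsed = 0;
  // Timestamp (ms) of the pending rescheduled or retried execution, if any.
  private OptionalLong pendingAt = OptionalLong.empty();

  ReschedulingSketch(int maxRetries, long retryDelayMs) {
    this.maxRetries = maxRetries;
    this.retryDelayMs = retryDelayMs;
  }

  /** Success cancels whatever was pending, resets retries, and may reschedule anew. */
  void onSuccess(long nowMs, OptionalLong rescheduleDelayMs) {
    retriesUsed = 0;
    pendingAt = rescheduleDelayMs.isPresent()
        ? OptionalLong.of(nowMs + rescheduleDelayMs.getAsLong())
        : OptionalLong.empty();
  }

  /** Failure schedules a retry only while the retry limit has not been reached. */
  void onFailure(long nowMs) {
    if (retriesUsed < maxRetries) {
      retriesUsed++;
      pendingAt = OptionalLong.of(nowMs + retryDelayMs);
    } else {
      pendingAt = OptionalLong.empty();
    }
  }

  OptionalLong pendingAt() {
    return pendingAt;
  }

  public static void main(String[] args) {
    ReschedulingSketch state = new ReschedulingSketch(1, 1_000);
    state.onSuccess(0, OptionalLong.of(60_000));  // outcome asks for a run in 60 s
    state.onSuccess(5_000, OptionalLong.empty()); // an event triggered a run at 5 s
    System.out.println(state.pendingAt().isPresent()); // prints "false"
  }
}
```

In this model, the execution rescheduled for the 60 second mark disappears as soon as the event-triggered run succeeds, which mirrors the "at the latest" guarantee described above.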

2.3 - Event sources and related topics

Handling Related Events with Event Sources

See also this blog post.

Event sources are a relatively simple yet powerful and extensible concept to trigger controller

executions, usually based on changes to dependent resources. You typically need an event source

when you want your Reconciler to be triggered when something occurs to secondary resources

that might affect the state of your primary resource. This is needed because a given

Reconciler will only listen by default to events affecting the primary resource type it is

configured for. Event sources act as listeners for events affecting these secondary resources so

that a reconciliation of the associated primary resource can be triggered when needed. Note that

these secondary resources need not be Kubernetes resources. Typically, when dealing with

non-Kubernetes objects or services, we can extend our operator to handle webhooks or websockets

or to react to any event coming from a service we interact with. This allows for very efficient

controller implementations because reconciliations are then only triggered when something occurs

on resources affecting our primary resources thus doing away with the need to periodically

reschedule reconciliations.

There are a few interesting points here:

The CustomResourceEventSource event source is a special one, responsible for handling events

pertaining to changes affecting our primary resources. This EventSource is always registered

for every controller automatically by the SDK. It is important to note that events always relate

to a given primary resource. Concurrency is still handled for you, even in the presence of

EventSource implementations, and the SDK still guarantees that there is no concurrent execution of

the controller for any given primary resource (though, of course, concurrent/parallel executions

of events pertaining to other primary resources still occur as expected).

Caching and Event Sources

Kubernetes resources are handled in a declarative manner. The same also holds true for event

sources. For example, if we define an event source to watch for changes of a Kubernetes Deployment

object using an InformerEventSource, we always receive the whole associated object from the

Kubernetes API. This object might be needed at any point during our reconciliation process and

it’s best to retrieve it from the event source directly when possible instead of fetching it

from the Kubernetes API since the event source guarantees that it will provide the latest

version. Not only that, but many event source implementations also cache resources they handle

so that it’s possible to retrieve the latest version of resources without needing to make any

calls to the Kubernetes API, thus allowing for very efficient controller implementations.

Note that after an operator starts, caches are already populated by the time the first reconciliation
is processed for the InformerEventSource implementation. However, this does not necessarily
hold true for all event source implementations (PerResourcePollingEventSource for example). The SDK
provides methods to handle this situation elegantly, allowing you to check if an object is
cached, retrieving it from a provided supplier if not. See the related method.

Registering Event Sources

To register event sources, your Reconciler has to override the prepareEventSources method and return
a list of event sources to register. One way to see this in action is to look at the
WebPage example (irrelevant details omitted):

import java.util.List;

@ControllerConfiguration

public class WebappReconciler

implements Reconciler<Webapp>, Cleaner<Webapp> {

// omitted code

@Override

public List<EventSource<?, Webapp>> prepareEventSources(EventSourceContext<Webapp> context) {

InformerEventSourceConfiguration<Deployment> configuration =

InformerEventSourceConfiguration.from(Deployment.class, Webapp.class)

.withLabelSelector(SELECTOR)

.build();

return List.of(new InformerEventSource<>(configuration, context));

}

}

In the example above an InformerEventSource is configured and registered.

InformerEventSource is one of the bundled EventSource implementations that JOSDK provides to

cover common use cases.

Managing Relation between Primary and Secondary Resources

Event sources let your operator know when a secondary resource has changed and that your

operator might need to reconcile this new information. However, in order to do so, the SDK needs

to somehow retrieve the primary resource associated with whichever secondary resource triggered

the event. In the Webapp example above, when an event occurs on a tracked Deployment, the

SDK needs to be able to identify which Webapp resource is impacted by that change.

Seasoned Kubernetes users already know one way to track this parent-child kind of relationship: using owner references. Indeed, that’s how the SDK deals with this situation by default as well. If your controller properly sets owner references on your secondary resources, the SDK will be able to follow that reference back to your primary resource automatically without you having to worry about it.

However, owner references cannot always be used as they are restricted to operating within a single namespace (i.e. you cannot have an owner reference to a resource in a different namespace) and are, by essence, limited to Kubernetes resources so you’re out of luck if your secondary resources live outside of a cluster.

This is why JOSDK provides the SecondaryToPrimaryMapper interface so that you can provide

alternative ways for the SDK to identify which primary resource needs to be reconciled when

something occurs to your secondary resources. We even provide some of these alternatives in the

Mappers

class.

Note that, while a set of ResourceID is returned, this set usually consists only of one

element. It is however possible to return multiple values or even no value at all to cover some

rare corner cases. Returning an empty set means that the mapper considered the secondary

resource event as irrelevant and the SDK will thus not trigger a reconciliation of the primary

resource in that situation.
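To make the contract concrete, here is a minimal sketch of an annotation-based mapping in plain Java. It only models the idea: ResourceId and Secondary are stand-in records rather than the JOSDK or Fabric8 classes, and the annotation keys are hypothetical values chosen for this example.

```java
import java.util.Map;
import java.util.Set;

/** Plain-Java model of a secondary-to-primary mapping; the types are stand-ins, not SDK classes. */
final class SecondaryToPrimaryMapperSketch {

  /** Stand-in for a resource identifier (name plus namespace). */
  record ResourceId(String name, String namespace) {}

  /** Stand-in for a secondary resource carrying metadata annotations. */
  record Secondary(String name, String namespace, Map<String, String> annotations) {}

  // Hypothetical annotation keys, used only in this example.
  static final String PRIMARY_NAME = "example.com/primary-name";
  static final String PRIMARY_NAMESPACE = "example.com/primary-namespace";

  /** Returns the primaries to reconcile; an empty set means the event is ignored. */
  static Set<ResourceId> toPrimaryIds(Secondary secondary) {
    String name = secondary.annotations().get(PRIMARY_NAME);
    if (name == null) {
      return Set.of(); // no link to a primary: the event is irrelevant
    }
    // Fall back to the secondary's own namespace when none is annotated.
    String namespace =
        secondary.annotations().getOrDefault(PRIMARY_NAMESPACE, secondary.namespace());
    return Set.of(new ResourceId(name, namespace));
  }

  public static void main(String[] args) {
    Secondary linked = new Secondary("cm", "ns1", Map.of(PRIMARY_NAME, "my-webapp"));
    Secondary unrelated = new Secondary("other", "ns1", Map.of());
    System.out.println(toPrimaryIds(linked).size());    // prints "1"
    System.out.println(toPrimaryIds(unrelated).size()); // prints "0"
  }
}
```

The second call returns an empty set, which corresponds to the case where no reconciliation is triggered.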

Adding a SecondaryToPrimaryMapper is typically sufficient when there is a one-to-many relationship

between primary and secondary resources. The secondary resources can be mapped to their primary

owner, and this is enough information to also get these secondary resources from the Context

object that’s passed to your Reconciler.

There are however cases when this isn’t sufficient and you need to provide an explicit mapping

between a primary resource and its associated secondary resources using an implementation of the

PrimaryToSecondaryMapper interface. This is typically needed when there are many-to-one or

many-to-many relationships between primary and secondary resources, e.g. when the primary resource

is referencing secondary resources.

See the PrimaryToSecondaryIT integration test for a sample.

Built-in EventSources

Multiple event sources are provided out of the box. The following are some of the more central ones:

InformerEventSource

InformerEventSource

is probably the most important EventSource implementation to know about. When you create an

InformerEventSource, JOSDK will automatically create and register a SharedIndexInformer, a

fabric8 Kubernetes client class, that will listen for events associated with the resource type

you configured your InformerEventSource with. If you want to listen to Kubernetes resource

events, InformerEventSource is probably the only thing you need to use. It’s highly

configurable so you can tune it to your needs. Take a look at

InformerEventSourceConfiguration

and associated classes for more details. One interesting feature we can mention here is the
ability to filter events so that you only get notified of events you care about. A
particularly interesting feature of the InformerEventSource, as opposed to using your own
informer-based listening mechanism, is that its caches are particularly well optimized, preventing
reconciliations from being triggered when not needed and allowing efficient operators to be written.

PerResourcePollingEventSource

PerResourcePollingEventSource is used to poll external APIs, which don’t support webhooks or other event notifications. It extends the abstract ExternalResourceCachingEventSource to support caching. See MySQL Schema sample for usage.

PollingEventSource

PollingEventSource

is similar to PerResourcePollingEventSource except that, contrary to that event source, it

doesn’t poll a specific API separately per resource, but periodically and independently of

actually observed primary resources.

Inbound event sources

SimpleInboundEventSource and CachingInboundEventSource are used to handle incoming events from webhooks and messaging systems.

ControllerResourceEventSource

ControllerResourceEventSource

is a special EventSource implementation that you will never have to deal with directly. It is,
however, at the core of the SDK and is automatically added for you: this is the main event source
that listens for changes to your primary resources and triggers your Reconciler when needed.
It features smart caching and is optimized to minimize Kubernetes API accesses and avoid
unduly triggering your Reconciler.

For more on the philosophy behind event sources that are not related to the Kubernetes API, see issue #729.

InformerEventSource Multi-Cluster Support

It is possible to handle resources for a remote cluster with InformerEventSource. To do so,

simply set a client that connects to a remote cluster:

InformerEventSourceConfiguration<SecondaryResource> configuration =
    InformerEventSourceConfiguration.from(SecondaryResource.class, PrimaryResource.class)
        .withKubernetesClient(remoteClusterClient)
        .withSecondaryToPrimaryMapper(Mappers.fromDefaultAnnotations())
        .build();

You will also need to specify a SecondaryToPrimaryMapper, since the default one

is based on owner references and won’t work across cluster instances. You could, for example, use the provided implementation that relies on annotations added to the secondary resources to identify the associated primary resource.

See related integration test.

Generation Awareness and Event Filtering

A best practice when an operator starts up is to reconcile all the associated resources because changes might have occurred to the resources while the operator was not running.

When this first reconciliation is done successfully, the next reconciliation is triggered if either

dependent resources are changed or the primary resource .spec field is changed. If other fields

like .metadata are changed on the primary resource, the reconciliation could be skipped. This

behavior is supported out of the box and reconciliation is by default not triggered if

changes to the primary resource do not increase the .metadata.generation field.

Note that changes to .metadata.generation are automatically handled by Kubernetes.

To turn off this feature, set generationAwareEventProcessing to false for the Reconciler.
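The filtering rule can be pictured as a comparison of generation values. Below is a minimal sketch in plain Java that assumes events are reduced to a resource key and a generation number. GenerationFilterSketch is a hypothetical name; the SDK's actual filter works on the resources carried by update events and is not reproduced here.

```java
import java.util.HashMap;
import java.util.Map;

/** Toy generation-aware filter (hypothetical; not the SDK's implementation). */
final class GenerationFilterSketch {
  // Last generation observed for each resource, keyed by "namespace/name".
  private final Map<String, Long> lastSeenGeneration = new HashMap<>();

  /** Returns true when the event should trigger a reconciliation. */
  boolean accept(String resourceKey, long generation) {
    Long previous = lastSeenGeneration.put(resourceKey, generation);
    // The first sighting always reconciles; afterwards only a generation increase does.
    return previous == null || generation > previous;
  }

  public static void main(String[] args) {
    GenerationFilterSketch filter = new GenerationFilterSketch();
    System.out.println(filter.accept("default/web", 1)); // prints "true": first event
    System.out.println(filter.accept("default/web", 1)); // prints "false": e.g. a label change
    System.out.println(filter.accept("default/web", 2)); // prints "true": .spec changed
  }
}
```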

Max Interval Between Reconciliations

When informers / event sources are properly set up, and the Reconciler implementation is

correct, no additional reconciliation triggers should be needed. However, it’s

a common practice

to have a failsafe periodic trigger in place, just to make sure resources are nevertheless

reconciled after a certain amount of time. This functionality is in place by default, with a

rather high time interval (currently 10 hours) after which a reconciliation will be

automatically triggered even in the absence of other events. See how to override this using the

standard annotation:

@ControllerConfiguration(maxReconciliationInterval = @MaxReconciliationInterval(

interval = 50,

timeUnit = TimeUnit.MILLISECONDS))

public class MyReconciler implements Reconciler<HasMetadata> {}

The event is not propagated at a fixed rate, rather it’s scheduled after each reconciliation. So the next reconciliation will occur at most within the specified interval after the last reconciliation.

This feature can be turned off by setting maxReconciliationInterval

to Constants.NO_MAX_RECONCILIATION_INTERVAL

or any non-positive number.

The automatic retries are not affected by this feature so a reconciliation will be re-triggered on error, according to the specified retry policy, regardless of this maximum interval setting.

Rate Limiting

It is possible to rate limit reconciliation on a per-resource basis. The rate limit also takes precedence over retry/re-schedule configurations: for example, even if a retry was scheduled for the next second but this request would make the resource go over its rate limit, the next reconciliation will be postponed according to the rate limiting rules. Note that the reconciliation is never cancelled, it will just be executed as early as possible based on rate limitations.

Rate limiting is by default turned off, since correct configuration depends on the reconciler

implementation, in particular, on how long a typical reconciliation takes.

(The parallelism of reconciliation itself can be limited via ConfigurationService
by configuring the ExecutorService appropriately.)

A default rate limiter implementation is provided, see PeriodRateLimiter.

Users can override it by implementing their own RateLimiter
and specifying this custom implementation using the rateLimiter field of the
@ControllerConfiguration annotation. Similarly to the Retry implementations,
RateLimiter implementations must provide an accessible, no-arg constructor for instantiation
purposes. They can also be configured automatically from your own annotation, provided that
your RateLimiter implementation also implements the AnnotationConfigurable interface,
parameterized by your custom annotation type.

To configure the default rate limiter use the @RateLimited annotation on your

Reconciler class. The following configuration limits each resource to reconcile at most twice

within a 3 second interval:

@RateLimited(maxReconciliations = 2, within = 3, unit = TimeUnit.SECONDS)

@ControllerConfiguration

public class MyReconciler implements Reconciler<MyCR> {

}

Thus, if a given resource was reconciled twice in one second, no further reconciliation for this resource will happen before two seconds have elapsed. Note that, since the rate is limited on a per-resource basis, other resources can still be reconciled at the same time, as long as they stay within their own rate limits.
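The arithmetic in this example can be checked with a small model. The following is a minimal sketch of a per-resource, fixed-window limiter in plain Java, assuming the window starts at the first permitted reconciliation. PeriodRateLimiterSketch and its acquire method are hypothetical names for illustration and do not reproduce the SDK's RateLimiter interface or its default implementation.

```java
import java.time.Duration;
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;

/** Toy per-resource rate limiter (hypothetical; not the SDK's implementation). */
final class PeriodRateLimiterSketch {
  private static final class Window {
    final long startMs;
    int count = 1; // the reconciliation that opened the window
    Window(long startMs) {
      this.startMs = startMs;
    }
  }

  private final int maxReconciliations;
  private final long periodMs;
  private final Map<String, Window> windows = new HashMap<>();

  PeriodRateLimiterSketch(int maxReconciliations, Duration period) {
    this.maxReconciliations = maxReconciliations;
    this.periodMs = period.toMillis();
  }

  /** Empty when the reconciliation may run now, otherwise the time left to wait. */
  Optional<Duration> acquire(String resourceKey, long nowMs) {
    Window window = windows.get(resourceKey);
    if (window == null || nowMs - window.startMs >= periodMs) {
      windows.put(resourceKey, new Window(nowMs)); // start a fresh window
      return Optional.empty();
    }
    if (window.count < maxReconciliations) {
      window.count++;
      return Optional.empty();
    }
    // Limit reached: postpone (never cancel) until the current window ends.
    return Optional.of(Duration.ofMillis(window.startMs + periodMs - nowMs));
  }

  public static void main(String[] args) {
    PeriodRateLimiterSketch limiter = new PeriodRateLimiterSketch(2, Duration.ofSeconds(3));
    limiter.acquire("ns/a", 0);
    limiter.acquire("ns/a", 1_000);
    System.out.println(limiter.acquire("ns/a", 1_000).get().toMillis()); // prints "2000"
    System.out.println(limiter.acquire("ns/b", 1_000).isPresent());      // prints "false"
  }
}
```

With two reconciliations allowed within three seconds, the third request for the same resource at the one second mark is postponed by two seconds, while a different resource is unaffected.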

Optimizing Caches

One of the ideas around the operator pattern is that all the relevant resources are cached, thus reconciliation is usually very fast (especially if no resources are updated in the process) since the operator is then mostly working with in-memory state. However, for large clusters, caching a huge number of primary and secondary resources might consume lots of memory. JOSDK provides ways to mitigate this issue and optimize the memory usage of controllers. While these features are working and tested, we need feedback from real production usage.

Bounded Caches for Informers

Limiting caches for informers - thus for Kubernetes resources - is supported by ensuring that resources are in the cache for a limited time, via a cache eviction of least recently used resources. This means that when resources are created and frequently reconciled, they stay “hot” in the cache. However, if, over time, a given resource “cools” down, i.e. it becomes less and less used to the point that it might not be reconciled anymore, it will eventually get evicted from the cache to free up memory. If such an evicted resource were to become reconciled again, the bounded cache implementation would then fetch it from the API server and the “hot/cold” cycle would start anew.

Since all resources need to be reconciled when a controller starts, it is not practical to set a maximal cache size, as it’s desirable that all resources be cached as soon as possible to make the initial reconciliation process on start as fast and efficient as possible, avoiding undue load on the API server. It’s therefore more interesting to gradually evict cold resources than to try to limit cache sizes.

See usage of the related implementation using Caffeine cache in integration tests for primary resources.

See also CaffeineBoundedItemStores for more details.
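The “hot/cold” cycle can be sketched with a small expire-after-access cache. This is a plain-Java illustration under stated assumptions, not the Caffeine-based implementation mentioned above: ExpiringCacheSketch is a hypothetical class, time is passed in explicitly to keep the example deterministic, and the loader function stands in for a fetch from the API server.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.function.Function;

/** Toy expire-after-access cache (hypothetical; not the Caffeine-backed item store). */
final class ExpiringCacheSketch<K, V> {
  private static final class Entry<T> {
    final T value;
    long lastAccessMs;
    Entry(T value, long lastAccessMs) {
      this.value = value;
      this.lastAccessMs = lastAccessMs;
    }
  }

  private final Map<K, Entry<V>> entries = new HashMap<>();
  private final long maxIdleMs;
  private final Function<K, V> apiServerFetch; // stand-in for an API server call
  private int fetchCount = 0;

  ExpiringCacheSketch(long maxIdleMs, Function<K, V> apiServerFetch) {
    this.maxIdleMs = maxIdleMs;
    this.apiServerFetch = apiServerFetch;
  }

  V get(K key, long nowMs) {
    // Evict every entry that has been idle for too long ("cold" resources).
    entries.values().removeIf(e -> nowMs - e.lastAccessMs >= maxIdleMs);
    Entry<V> entry = entries.get(key);
    if (entry == null) {
      fetchCount++; // cache miss: fall back to the (simulated) API server
      entry = new Entry<>(apiServerFetch.apply(key), nowMs);
      entries.put(key, entry);
    }
    entry.lastAccessMs = nowMs; // each access keeps the entry "hot"
    return entry.value;
  }

  int fetchCount() {
    return fetchCount;
  }

  public static void main(String[] args) {
    ExpiringCacheSketch<String, String> cache =
        new ExpiringCacheSketch<>(1_000, key -> "resource:" + key);
    cache.get("a", 0);
    cache.get("a", 500);   // still hot, served from memory
    cache.get("a", 2_000); // idle for 1.5 s, evicted and fetched again
    System.out.println(cache.fetchCount()); // prints "2"
  }
}
```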

2.4 - Working with EventSource caches

As described in Event sources and related topics, event sources serve as the backbone for caching resources and triggering reconciliation for primary resources that are related to these secondary resources.

In the Kubernetes ecosystem, the component responsible for this is called an Informer. Without delving into the details (there are plenty of excellent resources online about informers), informers watch resources, cache them, and emit events when resources change.

EventSource is a generalized concept that extends the Informer pattern to non-Kubernetes resources,

allowing you to cache external resources and trigger reconciliation when those resources change.

The InformerEventSource

The underlying informer implementation comes from the Fabric8 client, called DefaultSharedIndexInformer. InformerEventSource in Java Operator SDK wraps the Fabric8 client informers. While this wrapper adds additional capabilities specifically required for controllers, this is the event source that most likely will be used to deal with Kubernetes resources.

These additional capabilities include:

- Maintaining an index that maps secondary resources in the informer cache to their related primary resources

- Setting up multiple informers for the same resource type when needed (for example, you need one informer per namespace if the informer is not watching the entire cluster)

- Dynamically adding and removing watched namespaces

- Other capabilities that are beyond the scope of this document

Associating Secondary Resources to Primary Resource

Event sources need to trigger the appropriate reconciler, providing the correct primary resource, whenever one of their

handled secondary resources changes. It is thus core to an event source’s role to identify which primary resource

(usually, your custom resource) is potentially impacted by that change.

The framework uses SecondaryToPrimaryMapper

for this purpose. For InformerEventSources, which target Kubernetes resources, this mapping is typically done using

either the owner reference or an annotation on the secondary resource. For external resources, other mechanisms need to

be used and there are also cases where the default mechanisms provided by the SDK do not work, even for Kubernetes

resources.

However, once the event source has triggered a primary resource reconciliation, the associated reconciler needs to
access the secondary resources whose changes caused the reconciliation. Indeed, the information from the secondary
resources might be needed during the reconciliation. For that purpose, InformerEventSource maintains a reverse
index, PrimaryToSecondaryIndex, based on the result of the SecondaryToPrimaryMapper.
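The reverse index can be pictured as a map from each primary to the set of secondaries that the mapper associated with it. The following is a minimal sketch in plain Java. PrimaryToSecondaryIndexSketch and its methods are hypothetical and only model the idea; they do not reproduce the SDK class of a similar name.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;

/** Toy reverse index from primary to secondary identifiers (not the SDK class). */
final class PrimaryToSecondaryIndexSketch {

  /** Stand-in for a resource identifier (name plus namespace). */
  record ResourceId(String name, String namespace) {}

  private final Map<ResourceId, Set<ResourceId>> index = new HashMap<>();

  /** Called on a secondary event, with the primaries returned by the mapper. */
  void onAddOrUpdate(ResourceId secondary, Set<ResourceId> primaries) {
    for (ResourceId primary : primaries) {
      index.computeIfAbsent(primary, key -> new HashSet<>()).add(secondary);
    }
  }

  /** Called when a secondary resource is deleted. */
  void onDelete(ResourceId secondary) {
    index.values().forEach(secondaries -> secondaries.remove(secondary));
    index.values().removeIf(Set::isEmpty);
  }

  /** Lookup used when the reconciler asks for the secondaries of a primary. */
  Set<ResourceId> getSecondaries(ResourceId primary) {
    return Set.copyOf(index.getOrDefault(primary, Set.of()));
  }

  public static void main(String[] args) {
    PrimaryToSecondaryIndexSketch idx = new PrimaryToSecondaryIndexSketch();
    ResourceId webPage = new ResourceId("page", "default");
    idx.onAddOrUpdate(new ResourceId("page-config", "default"), Set.of(webPage));
    System.out.println(idx.getSecondaries(webPage).size()); // prints "1"
  }
}
```

Note that the index is only updated when a secondary event arrives, so a primary that no secondary event has mapped to yet has no entry.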

Unified API for Related Resources

To access all related resources for a primary resource, the framework provides an API to access the related

secondary resources using the Set<R> getSecondaryResources(Class<R> expectedType) method of the Context object

provided as part of the reconcile method.

For InformerEventSource, this will leverage the associated PrimaryToSecondaryIndex. Resources are then retrieved

from the informer’s cache. Note that since all those steps work on top of indexes, those operations are very fast,

usually O(1).

While we’ve focused mostly on InformerEventSource, this concept can be extended to all EventSources, since

EventSource

actually implements the Set<R> getSecondaryResources(P primary) method that can be called from the Context.

As there can be multiple event sources for the same resource types, things are a little more complex: the union of each event source results is returned.

Getting Resources Directly from Event Sources

Note that nothing prevents you from directly accessing resources in the cache without going through

getSecondaryResources(...):

public class WebPageReconciler implements Reconciler<WebPage> {

InformerEventSource<ConfigMap, WebPage> configMapEventSource;

@Override

public UpdateControl<WebPage> reconcile(WebPage webPage, Context<WebPage> context) {

// accessing resource directly from an event source

var mySecondaryResource = configMapEventSource.get(new ResourceID("name","namespace"));

// details omitted

}

@Override

public List<EventSource<?, WebPage>> prepareEventSources(EventSourceContext<WebPage> context) {

configMapEventSource = new InformerEventSource<>(

InformerEventSourceConfiguration.from(ConfigMap.class, WebPage.class)

.withLabelSelector(SELECTOR)

.build(),

context);

return List.of(configMapEventSource);

}

}

The Use Case for PrimaryToSecondaryMapper

TL;DR: PrimaryToSecondaryMapper allows InformerEventSource to access secondary resources directly

instead of using the PrimaryToSecondaryIndex. When this mapper is configured, InformerEventSource.getSecondaryResources(..)

will call the mapper to retrieve the target secondary resources. This is typically required when the SecondaryToPrimaryMapper

uses informer caches to list the target resources.

As discussed, we provide a unified API to access related resources using Context.getSecondaryResources(...).

The term “Secondary” refers to resources that a reconciler needs to consider when properly reconciling a primary

resource. These resources encompass more than just “child” resources (resources created by a reconciler that

typically have an owner reference pointing to the primary custom resource). They also include

“related” resources (which may or may not be managed by Kubernetes) that serve as input for reconciliations.

In some cases, the SDK needs additional information beyond what’s readily available, particularly when secondary resources lack owner references or any direct link to their associated primary resource.

Consider this example: a Job primary resource can be assigned to run on a cluster, represented by a

Cluster resource.

Multiple jobs can run on the same cluster, so multiple Job resources can reference the same Cluster resource. However,

a Cluster resource shouldn’t know about Job resources, as this information isn’t part of what defines a cluster.

When a cluster changes, though, we might want to redirect associated jobs to other clusters. Our reconciler

therefore needs to determine which Job (primary) resources are associated with the changed Cluster (secondary)

resource.

See full

sample here.

InformerEventSourceConfiguration

.from(Cluster.class, Job.class)

.withSecondaryToPrimaryMapper(cluster ->

context.getPrimaryCache()

.list()

.filter(job -> job.getSpec().getClusterName().equals(cluster.getMetadata().getName()))

.map(ResourceID::fromResource)

.collect(Collectors.toSet()))

This configuration will trigger all related Jobs when the associated cluster changes and maintains the PrimaryToSecondaryIndex,

allowing us to use getSecondaryResources in the Job reconciler to access the cluster.

However, there’s a potential issue: when a new Job is created, it doesn’t automatically propagate

to the PrimaryToSecondaryIndex in the Cluster’s InformerEventSource. Re-indexing only occurs

when a Cluster event is received, which triggers all related Jobs again.

Until this re-indexing happens, you cannot use getSecondaryResources for the new Job, since it

won’t be present in the reverse index.

You can work around this by accessing the Cluster directly from the cache in the reconciler:

@Override

public UpdateControl<Job> reconcile(Job job, Context<Job> context) {

clusterInformer.get(new ResourceID(job.getSpec().getClusterName(), job.getMetadata().getNamespace()));

// omitted details

}

However, if you prefer to use the unified API (context.getSecondaryResources()), you need to add

a PrimaryToSecondaryMapper:

clusterInformer.withPrimaryToSecondaryMapper( job ->

Set.of(new ResourceID(job.getSpec().getClusterName(), job.getMetadata().getNamespace())));

When using PrimaryToSecondaryMapper, the InformerEventSource bypasses the PrimaryToSecondaryIndex

and instead calls the mapper to retrieve resources based on its results.

In fact, when this mapper is configured, the PrimaryToSecondaryIndex isn’t even initialized.

Using Informer Indexes to Improve Performance

In the SecondaryToPrimaryMapper example above, we iterate through all resources in the cache:

context.getPrimaryCache().list().filter(job -> job.getSpec().getClusterName().equals(cluster.getMetadata().getName()))

This approach can be inefficient when dealing with a large number of primary (Job) resources. To improve performance,

you can create an index in the underlying Informer that indexes the target jobs for each cluster:

@Override

public List<EventSource<?, Job>> prepareEventSources(EventSourceContext<Job> context) {

context.getPrimaryCache()

.addIndexer(JOB_CLUSTER_INDEX,

(job -> List.of(indexKey(job.getSpec().getClusterName(), job.getMetadata().getNamespace()))));

// omitted details

}

where indexKey is a String that uniquely identifies a Cluster:

private String indexKey(String clusterName, String namespace) {

return clusterName + "#" + namespace;

}

With this index in place, you can retrieve the target resources very efficiently:

InformerEventSource<Cluster, Job> clusterInformer =

new InformerEventSource<>(

InformerEventSourceConfiguration.from(Cluster.class, Job.class)

.withSecondaryToPrimaryMapper(

cluster ->

context

.getPrimaryCache()

.byIndex(

JOB_CLUSTER_INDEX,

indexKey(

cluster.getMetadata().getName(),

cluster.getMetadata().getNamespace()))

.stream()

.map(ResourceID::fromResource)

.collect(Collectors.toSet()))

.withNamespacesInheritedFromController().build(), context);
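To see why the index helps, compare a full scan with a keyed lookup. The sketch below is plain Java with a hand-rolled index and is only meant to illustrate the idea: InformerIndexSketch and its Job record are hypothetical stand-ins, not the Fabric8 informer indexer used in the snippets above.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Toy keyed index over cached jobs (hypothetical; not the Fabric8 informer indexer). */
final class InformerIndexSketch {

  /** Stand-in for the Job custom resource, reduced to the fields used here. */
  record Job(String name, String namespace, String clusterName) {}

  /** Same key format as the indexKey helper shown above. */
  static String indexKey(String clusterName, String namespace) {
    return clusterName + "#" + namespace;
  }

  private final Map<String, List<Job>> jobsByCluster = new HashMap<>();

  /** Indexes a job under the key of the cluster it references. */
  void add(Job job) {
    jobsByCluster
        .computeIfAbsent(indexKey(job.clusterName(), job.namespace()), key -> new ArrayList<>())
        .add(job);
  }

  /** Single map lookup instead of filtering every cached job. */
  List<Job> byIndex(String key) {
    return List.copyOf(jobsByCluster.getOrDefault(key, List.of()));
  }

  public static void main(String[] args) {
    InformerIndexSketch cache = new InformerIndexSketch();
    cache.add(new Job("job-1", "default", "cluster-a"));
    cache.add(new Job("job-2", "default", "cluster-b"));
    cache.add(new Job("job-3", "default", "cluster-a"));
    System.out.println(cache.byIndex(indexKey("cluster-a", "default")).size()); // prints "2"
  }
}
```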

2.5 - Configurations

The Java Operator SDK (JOSDK) provides abstractions that work great out of the box. However, we recognize that default behavior isn’t always suitable for every use case. Numerous configuration options help you tailor the framework to your specific needs.

Configuration options operate at several levels:

- Operator-level using ConfigurationService

- Reconciler-level using ControllerConfiguration

- DependentResource-level using the DependentResourceConfigurator interface

- EventSource-level where some event sources (like InformerEventSource) need fine-tuning to identify which events trigger the associated reconciler

Operator-Level Configuration

Configuration that impacts the entire operator is performed via the ConfigurationService class. ConfigurationService is an abstract class with different implementations based on which framework flavor you use (e.g., Quarkus Operator SDK replaces the default implementation). Configurations initialize with sensible defaults but can be changed during initialization.

For example, to disable CRD validation on startup and configure leader election:

Operator operator = new Operator( override -> override

.checkingCRDAndValidateLocalModel(false)

.withLeaderElectionConfiguration(new LeaderElectionConfiguration("bar", "barNS")));

Reconciler-Level Configuration

While reconcilers are typically configured using the @ControllerConfiguration annotation, you can also override configuration at runtime when registering the reconciler with the operator. You can either:

- Pass a completely new ControllerConfiguration instance

- Override specific aspects using a ControllerConfigurationOverrider Consumer (preferred)

Operator operator;

Reconciler reconciler;

...

operator.register(reconciler, configOverrider ->

configOverrider.withFinalizer("my-nifty-operator/finalizer").withLabelSelector("foo=bar"));

Dynamically Changing Target Namespaces

A controller can be configured to watch a specific set of namespaces in addition to the

namespace in which it is currently deployed or the whole cluster. The framework supports

dynamically changing the list of these namespaces while the operator is running.

When a reconciler is registered, an instance of

RegisteredController

is returned, providing access to the methods allowing users to change watched namespaces as the

operator is running.

A typical scenario would probably involve extracting the list of target namespaces from a

ConfigMap or some other input but this part is out of the scope of the framework since this is

use-case specific. For example, reacting to changes to a ConfigMap would probably involve

registering an associated Informer and then calling the changeNamespaces method on

RegisteredController.

public static void main(String[] args) {

KubernetesClient client = new DefaultKubernetesClient();

Operator operator = new Operator(client);

RegisteredController registeredController = operator.register(new WebPageReconciler(client));

operator.installShutdownHook();

operator.start();

// call registeredController further while operator is running

}

If watched namespaces change for a controller, it might be desirable to propagate these changes to

InformerEventSources associated with the controller. In order to express this,

InformerEventSource implementations interested in following such changes need to be

configured appropriately so that the followControllerNamespaceChanges method returns true:

@ControllerConfiguration

public class MyReconciler implements Reconciler<TestCustomResource> {

@Override

public Map<String, EventSource> prepareEventSources(

EventSourceContext<TestCustomResource> context) {

InformerEventSource<ConfigMap, TestCustomResource> configMapES =

new InformerEventSource<>(InformerEventSourceConfiguration.from(ConfigMap.class, TestCustomResource.class)

.withNamespacesInheritedFromController(context)

.build(), context);

return EventSourceUtils.nameEventSources(configMapES);

}

}

As the code snippet above shows, the informer initially inherits its namespaces from the controller, and it also adjusts its target namespaces whenever they change for the controller.

See also the integration test for this feature.

DependentResource-level configuration

It is possible to define custom annotations to configure custom DependentResource implementations. In order to provide

such a configuration mechanism for your own DependentResource implementations, they must be annotated with the

@Configured annotation. This annotation defines 3 fields that tie everything together:

- by, which specifies which annotation class will be used to configure your dependents,

- with, which specifies the class holding the configuration object for your dependents, and

- converter, which specifies the ConfigurationConverter implementation in charge of converting the annotation specified by the by field into objects of the class specified by the with field.

ConfigurationConverter instances implement a single configFrom method, which will receive, as expected, the

annotation instance annotating the dependent resource instance to be configured, but it can also extract information

from the DependentResourceSpec instance associated with the DependentResource class so that metadata from it can be

used in the configuration, as well as the parent ControllerConfiguration, if needed. The role of

ConfigurationConverter implementations is to extract the annotation information, augment it with metadata from the

DependentResourceSpec and the configuration from the parent controller on which the dependent is defined, to finally

create the configuration object that the DependentResource instances will use.

However, one last element is required to finish the configuration process: the target DependentResource class must

implement the ConfiguredDependentResource interface, parameterized with the configuration class defined by the

with field of the @Configured annotation. The framework calls this interface to inject the configuration at the

appropriate time and to retrieve the configuration, if it’s available.

For example, KubernetesDependentResource, a core implementation that the framework provides, can be configured via the

@KubernetesDependent annotation. This setup is configured as follows:

@Configured(

by = KubernetesDependent.class,

with = KubernetesDependentResourceConfig.class,

converter = KubernetesDependentConverter.class)

public abstract class KubernetesDependentResource<R extends HasMetadata, P extends HasMetadata>

extends AbstractEventSourceHolderDependentResource<R, P, InformerEventSource<R, P>>

implements ConfiguredDependentResource<KubernetesDependentResourceConfig<R>> {

// code omitted

}

The @Configured annotation specifies that KubernetesDependentResource instances can be configured by using the

@KubernetesDependent annotation, which gets converted into a KubernetesDependentResourceConfig object by a

KubernetesDependentConverter. That configuration object is then injected by the framework in the

KubernetesDependentResource instance, after it’s been created, because the class implements the

ConfiguredDependentResource interface, properly parameterized.
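To make the by / with / converter chain more concrete, here is a minimal, self-contained sketch that mimics the mechanism with plain Java. Everything in it is a hypothetical stand-in: the @HttpDependent annotation, the HttpDependentConfig record, the configFrom method and the Configurable interface only imitate the roles of the annotation named by by, the class named by with, a ConfigurationConverter and ConfiguredDependentResource. They are not the JOSDK types and do not reproduce their exact signatures.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.util.Optional;

// Simplified illustration only; none of these types belong to JOSDK.
final class ConfiguredSketch {

  /** Hypothetical annotation playing the role of the "by" field. */
  @Retention(RetentionPolicy.RUNTIME)
  @Target(ElementType.TYPE)
  @interface HttpDependent {
    String baseUrl();

    int timeoutSeconds() default 10;
  }

  /** Hypothetical configuration object playing the role of the "with" field. */
  record HttpDependentConfig(String baseUrl, int timeoutSeconds, String controllerName) {}

  /** Stand-in for a converter: annotation values plus parent metadata become a config object. */
  static HttpDependentConfig configFrom(HttpDependent annotation, String controllerName) {
    return new HttpDependentConfig(
        annotation.baseUrl(), annotation.timeoutSeconds(), controllerName);
  }

  /** Stand-in for the interface through which the configuration is injected and read back. */
  interface Configurable<C> {
    void configureWith(C config);

    Optional<C> configuration();
  }

  /** Note that the interface is parameterized with the configuration class, not the annotation. */
  @HttpDependent(baseUrl = "https://example.org/api")
  static class MyHttpDependent implements Configurable<HttpDependentConfig> {
    private HttpDependentConfig config;

    @Override
    public void configureWith(HttpDependentConfig config) {
      this.config = config;
    }

    @Override
    public Optional<HttpDependentConfig> configuration() {
      return Optional.ofNullable(config);
    }
  }

  /** Roughly what a framework would do: read the annotation, convert it, inject the result. */
  static MyHttpDependent createConfigured(String controllerName) {
    MyHttpDependent dependent = new MyHttpDependent();
    HttpDependent annotation = MyHttpDependent.class.getAnnotation(HttpDependent.class);
    dependent.configureWith(configFrom(annotation, controllerName));
    return dependent;
  }
}
```

The point of the sketch is the separation of concerns: the annotation only carries declarative values, the converter is the single place where those values are merged with information from the parent controller, and the dependent itself only ever sees the finished configuration object.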

For more information on how to use this feature, we recommend looking at how this mechanism is implemented for

KubernetesDependentResource in the core framework, SchemaDependentResource in the samples or CustomAnnotationDep

in the BaseConfigurationServiceTest test class.

EventSource-level configuration

TODO

2.6 - Observability

Runtime Info

RuntimeInfo is used mainly to check the actual health of event sources. Based on this information it is easy to implement custom liveness probes.

This is related to the stopOnInformerErrorDuringStartup setting: this flag usually needs to be set to false in order to control the exact liveness properties.

See also an example implementation in the WebPage sample.
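The decision logic behind such a liveness probe can be sketched in plain Java as follows. The Status enum and the nested map (controller name to event source name to status) are stand-ins chosen for this illustration; in a real operator the equivalent data would be derived from RuntimeInfo, whose accessors are not shown here.

```java
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;

// Illustrative only; the types below are simplified stand-ins, not JOSDK classes.
final class LivenessSketch {

  /** Stand-in for the health status an event source might report. */
  enum Status {
    HEALTHY,
    UNHEALTHY,
    UNKNOWN
  }

  /** Returns sorted "controller/eventSource" identifiers for every unhealthy event source. */
  static List<String> unhealthy(Map<String, Map<String, Status>> statusByController) {
    return statusByController.entrySet().stream()
        .flatMap(
            controller ->
                controller.getValue().entrySet().stream()
                    .filter(eventSource -> eventSource.getValue() == Status.UNHEALTHY)
                    .map(eventSource -> controller.getKey() + "/" + eventSource.getKey()))
        .sorted()
        .collect(Collectors.toList());
  }

  /** A liveness probe could report "alive" only while no event source is unhealthy. */
  static boolean isAlive(Map<String, Map<String, Status>> statusByController) {
    return unhealthy(statusByController).isEmpty();
  }
}
```

Whether an UNKNOWN status should count as alive is a policy choice. This sketch treats it as alive so that a probe does not fail before the first health information is available.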

Contextual Info for Logging with MDC

Logging is enhanced with additional contextual information using MDC. The following attributes are available in most parts of reconciliation logic and during the execution of the controller:

| MDC Key | Value added from primary resource |
|---|---|
| resource.apiVersion | .apiVersion |
| resource.kind | .kind |
| resource.name | .metadata.name |
| resource.namespace | .metadata.namespace |
| resource.resourceVersion | .metadata.resourceVersion |
| resource.generation | .metadata.generation |
| resource.uid | .metadata.uid |

For more information about MDC see this link.

Metrics

JOSDK provides built-in support for reporting metrics about what is happening with your reconcilers, in the form of

the Metrics interface. You can implement this interface to connect to your metrics provider of choice; JOSDK calls its

methods as it goes about reconciling resources. By default, a no-operation implementation is used, which is a sane

default with no overhead. A micrometer-based implementation is also provided.

You can use a different implementation by overriding the default one provided by the default ConfigurationService, as

follows:

Metrics metrics; // initialize your metrics implementation

Operator operator = new Operator(client, o -> o.withMetrics(metrics));

Micrometer implementation

The micrometer implementation is typically created using one of the provided factory methods which, depending on which is used, will return either a ready to use instance or a builder allowing users to customize how the implementation behaves, in particular when it comes to the granularity of collected metrics. It is, for example, possible to collect metrics on a per-resource basis via tags that are associated with meters. This is the default, historical behavior but this will change in a future version of JOSDK because this dramatically increases the cardinality of metrics, which could lead to performance issues.

To create a MicrometerMetrics implementation that behaves how it has historically behaved, you can just create an

instance via:

MeterRegistry registry; // initialize your registry implementation

Metrics metrics = MicrometerMetrics.newMicrometerMetricsBuilder(registry).build();

Through the builder you can, for example, configure whether the implementation should collect metrics on a per-resource basis, whether associated meters should be removed when a resource is deleted, and how the clean-up is performed. See the documentation of the relevant classes for more details.

For example, the following will create a MicrometerMetrics instance configured to collect metrics on a per-resource

basis, deleting the associated meters after 5 seconds when a resource is deleted, using up to 2 threads to do so.

MicrometerMetrics.newPerResourceCollectingMicrometerMetricsBuilder(registry)

.withCleanUpDelayInSeconds(5)

.withCleaningThreadNumber(2)

.build();

Operator SDK metrics

The micrometer implementation records the following metrics:

| Meter name | Type | Tag names | Description |
|---|---|---|---|
| operator.sdk.reconciliations.executions.<reconciler name> | gauge | group, version, kind | Number of executions of the named reconciler |
| operator.sdk.reconciliations.queue.size.<reconciler name> | gauge | group, version, kind | How many resources are queued to get reconciled by named reconciler |
| operator.sdk.<map name>.size | gauge map size | | Gauge tracking the size of a specified map (currently unused but could be used to monitor caches size) |
| operator.sdk.events.received | counter | <resource metadata>, event, action | Number of received Kubernetes events |
| operator.sdk.events.delete | counter | <resource metadata> | Number of received Kubernetes delete events |
| operator.sdk.reconciliations.started | counter | <resource metadata>, reconciliations.retries.last, reconciliations.retries.number | Number of started reconciliations per resource type |
| operator.sdk.reconciliations.failed | counter | <resource metadata>, exception | Number of failed reconciliations per resource type |
| operator.sdk.reconciliations.success | counter | <resource metadata> | Number of successful reconciliations per resource type |
| operator.sdk.controllers.execution.reconcile | timer | <resource metadata>, controller | Time taken for reconciliations per controller |
| operator.sdk.controllers.execution.cleanup | timer | <resource metadata>, controller | Time taken for cleanups per controller |
| operator.sdk.controllers.execution.reconcile.success | counter | controller, type | Number of successful reconciliations per controller |
| operator.sdk.controllers.execution.reconcile.failure | counter | controller, exception | Number of failed reconciliations per controller |
| operator.sdk.controllers.execution.cleanup.success | counter | controller, type | Number of successful cleanups per controller |
| operator.sdk.controllers.execution.cleanup.failure | counter | controller, exception | Number of failed cleanups per controller |

As you can see, all the recorded metrics start with the operator.sdk prefix. <resource metadata>, in the table above,

refers to resource-specific metadata, which depends on the considered metric and on how the implementation is

configured. It can be summed up as follows: group?, version, kind, [name, namespace?], scope, where the tags in square

brackets ([]) won’t be present when per-resource collection is disabled and tags followed by a question mark are

omitted if the associated value is empty. Note that for controllers’ execution metrics, these tag names carry an

additional "resource." prefix (including the dot). This prefix might be removed in a future version for greater consistency.
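The tag rule described above can be written down as a small function. This is only an illustration of the rule as stated in the text, not the code used by the micrometer implementation. The class and method names are hypothetical, and applying the resource. prefix uniformly to every tag name (including scope) is an assumption.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper illustrating "group?, version, kind, [name, namespace?], scope".
final class ResourceTagsSketch {

  /**
   * Computes the tag names for a resource.
   *
   * @param group API group, omitted from the tags when null or empty
   * @param namespace resource namespace, omitted when null or empty
   * @param perResource whether per-resource collection is enabled
   * @param controllerExecution whether the metric is a controller execution metric
   */
  static List<String> tagNames(
      String group, String namespace, boolean perResource, boolean controllerExecution) {
    List<String> names = new ArrayList<>();
    if (group != null && !group.isEmpty()) {
      names.add("group");
    }
    names.add("version");
    names.add("kind");
    if (perResource) {
      names.add("name");
      if (namespace != null && !namespace.isEmpty()) {
        names.add("namespace");
      }
    }
    names.add("scope");
    if (!controllerExecution) {
      return names;
    }
    // Assumption: controller execution metrics prefix every tag name with "resource."
    List<String> prefixed = new ArrayList<>();
    for (String name : names) {
      prefixed.add("resource." + name);
    }
    return prefixed;
  }
}
```

For a namespaced resource in the apps group with per-resource collection enabled, this yields group, version, kind, name, namespace, scope. With per-resource collection disabled and an empty group, only version, kind, scope remain.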

Aggregated Metrics

The AggregatedMetrics class provides a way to combine multiple metrics providers into a single metrics instance using

the composite pattern. This is particularly useful when you want to simultaneously collect metrics data from different

monitoring systems or providers.

You can create an AggregatedMetrics instance by providing a list of existing metrics implementations:

// create individual metrics instances

Metrics micrometerMetrics = MicrometerMetrics.withoutPerResourceMetrics(registry);

Metrics customMetrics = new MyCustomMetrics();

Metrics loggingMetrics = new LoggingMetrics();

// combine them into a single aggregated instance

Metrics aggregatedMetrics = new AggregatedMetrics(List.of(

micrometerMetrics,

customMetrics,

loggingMetrics

));

// use the aggregated metrics with your operator

Operator operator = new Operator(client, o -> o.withMetrics(aggregatedMetrics));

This approach allows you to easily combine different metrics collection strategies, such as sending metrics to both Prometheus (via Micrometer) and a custom logging system simultaneously.
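For readers unfamiliar with the composite pattern mentioned above, the following self-contained sketch shows the idea with a deliberately tiny, hypothetical SimpleMetrics interface. It is not the JOSDK Metrics interface and does not claim to mirror how AggregatedMetrics is implemented. It only illustrates how one instance can fan out every callback to several delegates.

```java
import java.util.ArrayList;
import java.util.List;

// Illustration of the composite pattern; all types here are hypothetical stand-ins.
final class CompositeMetricsSketch {

  /** Heavily reduced stand-in for a metrics callback interface. */
  interface SimpleMetrics {
    default void reconciliationStarted(String resourceName) {}

    default void reconciliationFailed(String resourceName, Exception error) {}
  }

  /** Forwards every callback to each delegate, in the order they were given. */
  static final class Aggregated implements SimpleMetrics {
    private final List<SimpleMetrics> delegates;

    Aggregated(List<SimpleMetrics> delegates) {
      this.delegates = List.copyOf(delegates); // defensive, immutable copy
    }

    @Override
    public void reconciliationStarted(String resourceName) {
      delegates.forEach(m -> m.reconciliationStarted(resourceName));
    }

    @Override
    public void reconciliationFailed(String resourceName, Exception error) {
      delegates.forEach(m -> m.reconciliationFailed(resourceName, error));
    }
  }

  /** Example delegate that simply records what it was told. */
  static final class Recording implements SimpleMetrics {
    final List<String> events = new ArrayList<>();

    @Override
    public void reconciliationStarted(String resourceName) {
      events.add("started:" + resourceName);
    }

    @Override
    public void reconciliationFailed(String resourceName, Exception error) {
      events.add("failed:" + resourceName + ":" + error.getMessage());
    }
  }
}
```

Because the aggregate implements the same interface as its delegates, the caller does not need to know how many providers are behind it, which is what allows a single withMetrics call to serve several monitoring systems.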

2.7 - Other Features